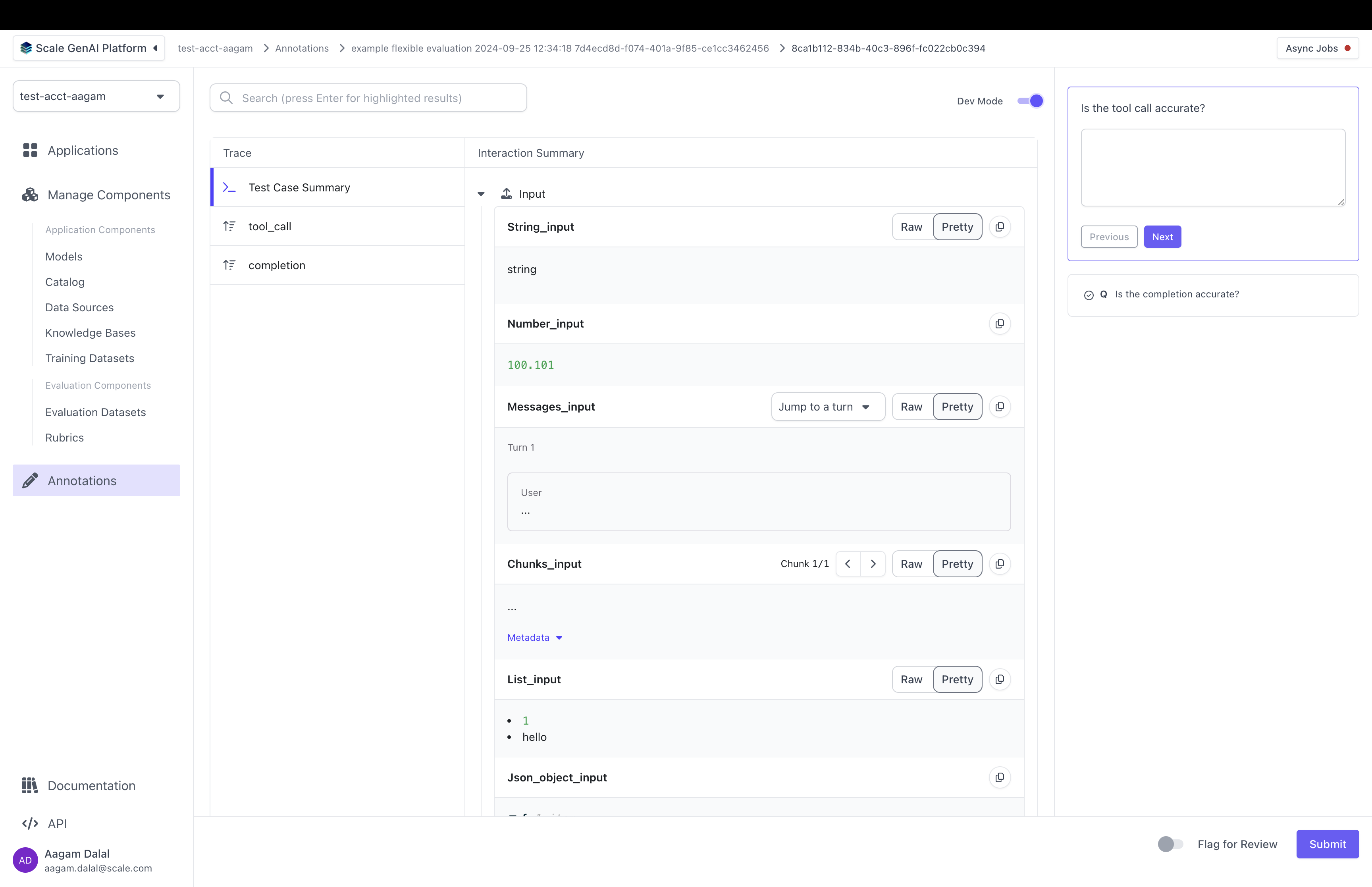

- display data from the trace

- select which parts of test case inputs and test case outputs to display

- modify the layout of the annotation UI

- annotation_config_type: by default this is “flexible”. The other types are “summarization” and “multiturn”, which make it easier to work with those specific use cases.

- components: a 2D list of annotation items. Each annotation item points to a location in the test case data, the test case output, or the trace. When annotators grade a test case output, they see the data pulled from each location.
  - Each annotation item has a “data_loc” field and an optional “label” field. The “data_loc” is an array that points to where the annotation data should be pulled from. The “label” is the name displayed to the user for that “data_loc”.

- direction: by default “row”. Decides whether components are laid out as rows or as columns.

⚠️ If a “data_loc” points somewhere that doesn’t exist for one or more test cases, you will not be able to create the evaluation.
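For illustration, here is a minimal sketch of a flexible annotation config written as a plain Python dictionary. The field names (annotation_config_type, components, data_loc, label, direction) come from the description above. Everything else is an assumption: representing the config as a dict, the input and output keys ("question", "answer"), the trace node id ("completion"), how the items are grouped into inner lists, and the helper `iter_items` with its fallback label. None of these are the SGP client's API.

```python
# Hypothetical annotation config expressed as a plain dict.
# Field names follow the docs; the data_loc targets ("question",
# "answer", "completion") are made-up examples.
annotation_config = {
    "annotation_config_type": "flexible",  # the default type
    "direction": "row",                    # the default layout
    "components": [
        # components is a 2D list: a list of lists of annotation items.
        [
            {"data_loc": ["test_case_data", "input", "question"], "label": "Question"},
            {"data_loc": ["test_case_output", "output", "answer"], "label": "Answer"},
        ],
        [
            # "label" is optional, so this item omits it.
            {"data_loc": ["trace", "completion", "output"]},
        ],
    ],
}


def iter_items(config):
    """Yield (label, data_loc) for every item in the 2D components list.

    Hypothetical helper: when an item has no label, it falls back to the
    data_loc joined with dots purely for display in this sketch.
    """
    for group in config["components"]:
        for item in group:
            yield item.get("label", ".".join(item["data_loc"])), item["data_loc"]


for label, loc in iter_items(annotation_config):
    print(label, loc)
```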

Each data_loc can take one of the following shapes:

| data_locator helper | data_loc array | Meaning |
|---|---|---|
| `data_locator.test_case_data.input` | `["test_case_data", "input"]` | Display the entire input from the test case |
| `data_locator.test_case_data.input["<input key>"]` | `["test_case_data", "input", "<input key>"]` | Display a single key from the input |
| `data_locator.test_case_data.expected` | `["test_case_data", "expected_output"]` | Display the entire expected output from the test case |
| `data_locator.test_case_data.expected["<expected output key>"]` | `["test_case_data", "expected_output", "<expected output key>"]` | Display a single key from the expected output |
| `data_locator.test_case_output` | `["test_case_output", "output"]` | Display the entire output from the test case output |
| `data_locator.test_case_output["<output key>"]` | `["test_case_output", "output", "<output key>"]` | Display a single key from the output |
| `data_locator.trace["<node id from the trace>"].input` | `["trace", "<node id from the trace>", "input"]` | Display the entire input from a single part of the trace |
| `data_locator.trace["<node id from the trace>"].input["<input key>"]` | `["trace", "<node id from the trace>", "input", "<input key>"]` | Display a single key from the input of a part of the trace |
| `data_locator.trace["<node id from the trace>"].output` | `["trace", "<node id from the trace>", "output"]` | Display the entire output from a single part of the trace |
| `data_locator.trace["<node id from the trace>"].output["<output key>"]` | `["trace", "<node id from the trace>", "output", "<output key>"]` | Display a single key from the output of a part of the trace |
| `data_locator.trace["<node id from the trace>"].expected` | `["trace", "<node id from the trace>", "expected"]` | Display the entire expected output from a single part of the trace |
| `data_locator.trace["<node id from the trace>"].expected["<expected key>"]` | `["trace", "<node id from the trace>", "expected", "<expected key>"]` | Display a single key from the expected output of a part of the trace |
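As noted earlier, an evaluation cannot be created if a data_loc points somewhere that doesn't exist for one or more test cases. The sketch below shows how a data_loc array can be read as a path, walked one key at a time through a test case. It also shows how you might pre-check every data_loc against every test case before creating an evaluation. It assumes that the test case data, test case output, and trace for each test case are available as nested dicts shaped like the table above. The record layout, the sample keys, and the helpers `resolve` and `missing_locs` are hypothetical and not part of SGP.

```python
from typing import Any

# Hypothetical records for two test cases. The nesting mirrors the
# data_loc table: test case data, test case output, and trace nodes
# keyed by node id. All keys and values are made up.
test_cases = [
    {
        "test_case_data": {
            "input": {"question": "What is 2 + 2?"},
            "expected_output": {"answer": "4"},
        },
        "test_case_output": {"output": {"answer": "4"}},
        "trace": {
            "completion": {"input": {"prompt": "What is 2 + 2?"}, "output": {"text": "4"}},
        },
    },
    {
        "test_case_data": {
            "input": {"question": "Capital of France?"},
            "expected_output": {"answer": "Paris"},
        },
        "test_case_output": {"output": {"answer": "Paris"}},
        "trace": {},  # this test case has no "completion" node
    },
]

# Sentinel so that a stored value of None is not mistaken for "missing".
_MISSING = object()


def resolve(record: dict, data_loc: list[str]) -> Any:
    """Walk a data_loc array key by key; return _MISSING if any key is absent."""
    node: Any = record
    for key in data_loc:
        if not isinstance(node, dict) or key not in node:
            return _MISSING
        node = node[key]
    return node


def missing_locs(cases: list[dict], data_locs: list[list[str]]) -> list[tuple[int, list[str]]]:
    """Return (test case index, data_loc) pairs that do not resolve."""
    return [
        (i, loc)
        for i, case in enumerate(cases)
        for loc in data_locs
        if resolve(case, loc) is _MISSING
    ]


locs = [
    ["test_case_data", "input", "question"],
    ["trace", "completion", "output"],
]
print(resolve(test_cases[0], locs[0]))  # What is 2 + 2?
print(missing_locs(test_cases, locs))   # [(1, ['trace', 'completion', 'output'])]
```

Here the second data_loc would block the evaluation, because the trace of the second test case has no "completion" node.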

You can use the data_locator helper instead of building the data_loc array by hand.
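To make the correspondence between the two columns of the table concrete, here is a small, hypothetical stand-in that mimics the data_locator access patterns and produces the matching data_loc arrays. It is not SGP's data_locator. The class names, the `locator` instance, and the `to_list` method are all invented for this sketch. It does encode two mappings from the table. The first is that `test_case_data.expected` maps to `"expected_output"` while a trace node's `expected` stays `"expected"`. The second is that `test_case_output` maps to `["test_case_output", "output"]`.

```python
class _Loc:
    """A data_loc path that can be extended with ["key"] indexing."""

    def __init__(self, path):
        self._path = list(path)

    def __getitem__(self, key):
        # Indexing returns a new path with one more key, e.g. .input["question"].
        return _Loc(self._path + [key])

    def to_list(self):
        return list(self._path)


class _TestCaseData:
    @property
    def input(self):
        return _Loc(["test_case_data", "input"])

    @property
    def expected(self):
        # The helper says "expected", but the array uses "expected_output".
        return _Loc(["test_case_data", "expected_output"])


class _TraceNode:
    def __init__(self, node_id):
        self._node_id = node_id

    def _part(self, part):
        return _Loc(["trace", self._node_id, part])

    input = property(lambda self: self._part("input"))
    output = property(lambda self: self._part("output"))
    expected = property(lambda self: self._part("expected"))


class _Trace:
    def __getitem__(self, node_id):
        return _TraceNode(node_id)


class _DataLocatorSketch:
    test_case_data = _TestCaseData()
    test_case_output = _Loc(["test_case_output", "output"])
    trace = _Trace()


locator = _DataLocatorSketch()  # hypothetical stand-in, not SGP's data_locator

print(locator.test_case_data.expected["answer"].to_list())
# ['test_case_data', 'expected_output', 'answer']
print(locator.trace["completion"].output.to_list())
# ['trace', 'completion', 'output']
```

Because `__getitem__` always returns a new `_Loc`, the shared `test_case_output` path is never mutated when you index into it.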

Customizing the Annotation UI per question

Sometimes, certain questions in an evaluation rubric are relevant only to a specific part of the test case, test case output, or trace. For instance, you might ask a question specifically about the “completion” or “reranking” step in the trace. In that case, you can create a question_id_to_annotation_config mapping that lets you override the annotation config for a specific question ID:

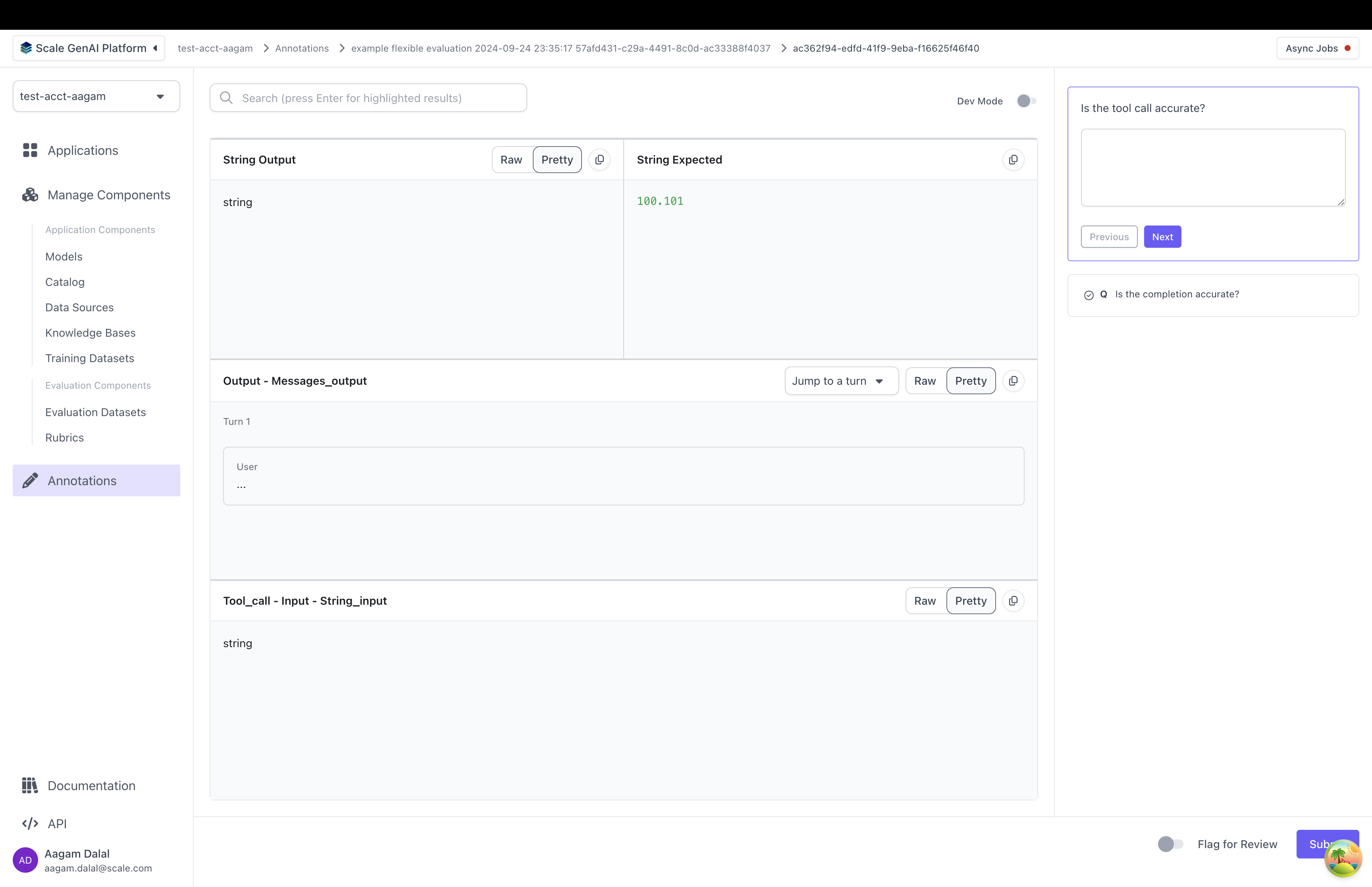
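As a rough illustration of how such a per-question override could be resolved, the sketch below keeps a default config and a question_id_to_annotation_config mapping as plain dicts. It picks the question-specific config when one exists and otherwise falls back to the default. Several details are assumptions: the question ID "reranking-quality", the trace node id "reranking", the labels, and the helper `config_for_question`. The sketch also assumes that an override replaces the whole config rather than merging with it. Check the SGP documentation for the actual semantics.

```python
# Hypothetical default annotation config, as a plain dict.
default_config = {
    "annotation_config_type": "flexible",
    "direction": "row",
    "components": [[
        {"data_loc": ["test_case_data", "input"], "label": "Input"},
        {"data_loc": ["test_case_output", "output"], "label": "Output"},
    ]],
}

# Hypothetical per-question overrides keyed by a made-up question ID.
question_id_to_annotation_config = {
    # This question is only about the reranking step in the trace.
    "reranking-quality": {
        "annotation_config_type": "flexible",
        "direction": "row",
        "components": [[
            {"data_loc": ["trace", "reranking", "input"], "label": "Candidates"},
            {"data_loc": ["trace", "reranking", "output"], "label": "Reranked"},
        ]],
    },
}


def config_for_question(question_id, default, overrides):
    """Return the question-specific config if present, else the default.

    Assumption: an override replaces the whole config (no merging).
    """
    return overrides.get(question_id, default)


cfg = config_for_question("reranking-quality", default_config, question_id_to_annotation_config)
print([item["label"] for item in cfg["components"][0]])  # ['Candidates', 'Reranked']
```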

Dev Mode

SGP also supports “Dev Mode”, which lets an annotator view all the inputs, the outputs, and the full trace at once. You can toggle Dev Mode from the top-right corner of the annotation UI.